sync

commit 28e717ace4

BIN

doc/yoloserv.odt

Normal file


Binary file not shown.

@@ -7,5 +7,5 @@ contact_links:
url: https://community.ultralytics.com/
about: Ask on Ultralytics Community Forum
- name: 🎧 Discord
url: https://discord.gg/n6cFeSPZdD
url: https://ultralytics.com/discord
about: Ask on Ultralytics Discord

132

downloads/ultralytics-main/.github/workflows/ci.yaml

vendored


@@ -10,17 +10,30 @@ on:
branches: [main]
schedule:
- cron: '0 0 * * *' # runs at 00:00 UTC every day
workflow_dispatch:
inputs:
hub:
description: 'Run HUB'
default: false
type: boolean
tests:
description: 'Run Tests'
default: false
type: boolean
benchmarks:
description: 'Run Benchmarks'
default: false
type: boolean

jobs:
HUB:
if: github.repository == 'ultralytics/ultralytics' && (github.event_name == 'schedule' || github.event_name == 'push')
if: github.repository == 'ultralytics/ultralytics' && (github.event_name == 'schedule' || github.event_name == 'push' || (github.event_name == 'workflow_dispatch' && github.event.inputs.hub == 'true'))
runs-on: ${{ matrix.os }}
strategy:
fail-fast: false
matrix:
os: [ubuntu-latest]
python-version: ['3.10']
model: [yolov5n]
python-version: ['3.11']
steps:
- uses: actions/checkout@v3
- uses: actions/setup-python@v4

@@ -56,8 +69,26 @@ jobs:
hub.reset_model(model_id)
model = YOLO('https://hub.ultralytics.com/models/' + model_id)
model.train()
- name: Test HUB inference API
shell: python
env:
API_KEY: ${{ secrets.ULTRALYTICS_HUB_API_KEY }}
MODEL_ID: ${{ secrets.ULTRALYTICS_HUB_MODEL_ID }}
run: |
import os
import requests
import json
api_key, model_id = os.environ['API_KEY'], os.environ['MODEL_ID']
url = f"https://api.ultralytics.com/v1/predict/{model_id}"
headers = {"x-api-key": api_key}
data = {"size": 320, "confidence": 0.25, "iou": 0.45}
with open("ultralytics/assets/zidane.jpg", "rb") as f:
response = requests.post(url, headers=headers, data=data, files={"image": f})
assert response.status_code == 200, f'Status code {response.status_code}, Reason {response.reason}'
print(json.dumps(response.json(), indent=2))

Benchmarks:
if: github.event_name != 'workflow_dispatch' || github.event.inputs.benchmarks == 'true'
runs-on: ${{ matrix.os }}
strategy:
fail-fast: false
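The `if:` expressions on the HUB and Benchmarks jobs above decide which jobs run for a given trigger. The following is a minimal Python sketch of that gating logic, assuming the event is reduced to a repository name, an event name, and a dict of string-valued inputs. The function names `should_run_hub` and `should_run_benchmarks` are hypothetical and do not appear in the workflow.

```python
def should_run_hub(repository: str, event_name: str, inputs: dict) -> bool:
    """Mirror the updated HUB job condition (sketch, not the workflow itself)."""
    if repository != "ultralytics/ultralytics":
        return False
    if event_name in ("schedule", "push"):
        return True
    # The workflow expression compares the input against the string 'true'.
    return event_name == "workflow_dispatch" and inputs.get("hub") == "true"


def should_run_benchmarks(event_name: str, inputs: dict) -> bool:
    """Mirror the Benchmarks job condition (sketch, not the workflow itself)."""
    return event_name != "workflow_dispatch" or inputs.get("benchmarks") == "true"


if __name__ == "__main__":
    # A manual run with only the 'hub' box ticked.
    manual_inputs = {"hub": "true", "benchmarks": "false"}
    print(should_run_hub("ultralytics/ultralytics", "workflow_dispatch", manual_inputs))
    print(should_run_benchmarks("workflow_dispatch", manual_inputs))
```

Read this way, a manual dispatch runs only the jobs whose input is set to `true`, while any event other than `workflow_dispatch` still runs Benchmarks unconditionally.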

@@ -75,12 +106,8 @@ jobs:
shell: bash # for Windows compatibility
run: |
python -m pip install --upgrade pip wheel
if [ "${{ matrix.os }}" == "macos-latest" ]; then
pip install -e '.[export]' --extra-index-url https://download.pytorch.org/whl/cpu
else
pip install -e '.[export]' --extra-index-url https://download.pytorch.org/whl/cpu
fi
yolo export format=tflite imgsz=32
pip install -e ".[export]" coverage --extra-index-url https://download.pytorch.org/whl/cpu
yolo export format=tflite imgsz=32 || true
- name: Check environment
run: |
echo "RUNNER_OS is ${{ runner.os }}"

@@ -93,44 +120,44 @@ jobs:
pip --version
pip list
- name: Benchmark DetectionModel
shell: python
run: |
from ultralytics.yolo.utils.benchmarks import benchmark
benchmark(model='${{ matrix.model }}.pt', imgsz=160, half=False, hard_fail=0.20)
shell: bash
run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}.pt' imgsz=160 verbose=0.26
- name: Benchmark SegmentationModel
shell: python
run: |
from ultralytics.yolo.utils.benchmarks import benchmark
benchmark(model='${{ matrix.model }}-seg.pt', imgsz=160, half=False, hard_fail=0.14)
shell: bash
run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-seg.pt' imgsz=160 verbose=0.30
- name: Benchmark ClassificationModel
shell: python
run: |
from ultralytics.yolo.utils.benchmarks import benchmark
benchmark(model='${{ matrix.model }}-cls.pt', imgsz=160, half=False, hard_fail=0.61)
shell: bash
run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-cls.pt' imgsz=160 verbose=0.36
- name: Benchmark PoseModel
shell: python
shell: bash
run: coverage run -a --source=ultralytics -m ultralytics.cfg.__init__ benchmark model='path with spaces/${{ matrix.model }}-pose.pt' imgsz=160 verbose=0.17
- name: Merge Coverage Reports
run: |
from ultralytics.yolo.utils.benchmarks import benchmark
benchmark(model='${{ matrix.model }}-pose.pt', imgsz=160, half=False, hard_fail=0.0)
coverage xml -o coverage-benchmarks.xml
- name: Upload Coverage Reports to CodeCov
uses: codecov/codecov-action@v3
with:
flags: Benchmarks
env:
CODECOV_TOKEN: ${{ secrets.CODECOV_TOKEN }}
- name: Benchmark Summary
run: |
cat benchmarks.log
echo "$(cat benchmarks.log)" >> $GITHUB_STEP_SUMMARY

Tests:
if: github.event_name != 'workflow_dispatch' || github.event.inputs.tests == 'true'
timeout-minutes: 60
runs-on: ${{ matrix.os }}
strategy:
fail-fast: false
matrix:
os: [ubuntu-latest]
python-version: ['3.7', '3.8', '3.9', '3.10']
model: [yolov8n]
python-version: ['3.11']
torch: [latest]
include:
- os: ubuntu-latest
python-version: '3.8' # torch 1.7.0 requires python >=3.6, <=3.8
model: yolov8n
python-version: '3.8' # torch 1.8.0 requires python >=3.6, <=3.8
torch: '1.8.0' # min torch version CI https://pypi.org/project/torchvision/
steps:
- uses: actions/checkout@v3
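The benchmark steps above now pass a model path containing spaces inside single quotes. As a rough illustration of why the quoting matters, the sketch below uses Python's `shlex` to split a command line the way a POSIX shell would and then separates `key=value` tokens. `parse_overrides` is a hypothetical helper, `yolov8n.pt` stands in for the `${{ matrix.model }}` value, and none of this is claimed to be how the `yolo` CLI parses its own arguments.

```python
import shlex


def parse_overrides(command: str) -> dict:
    """Split a shell-style command line and collect its key=value tokens."""
    overrides = {}
    for token in shlex.split(command):
        if "=" in token:
            # Split on the first '=' only, so values may contain '='.
            key, value = token.split("=", 1)
            overrides[key] = value
    return overrides


if __name__ == "__main__":
    cmd = "benchmark model='path with spaces/yolov8n.pt' imgsz=160 verbose=0.26"
    print(parse_overrides(cmd))
```

With the quotes in place the path arrives as a single `model=...` token. Without them the shell would hand the program three separate tokens, and only the first would carry the `model=` key.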

@@ -140,12 +167,12 @@ jobs:
cache: 'pip' # caching pip dependencies
- name: Install requirements
shell: bash # for Windows compatibility
run: |
run: | # CoreML must be installed before export due to protobuf error from AutoInstall
python -m pip install --upgrade pip wheel
if [ "${{ matrix.torch }}" == "1.8.0" ]; then
pip install -e . torch==1.8.0 torchvision==0.9.0 pytest --extra-index-url https://download.pytorch.org/whl/cpu
pip install -e . torch==1.8.0 torchvision==0.9.0 pytest-cov "coremltools>=7.0.b1" --extra-index-url https://download.pytorch.org/whl/cpu
else
pip install -e . pytest --extra-index-url https://download.pytorch.org/whl/cpu
pip install -e . pytest-cov "coremltools>=7.0.b1" --extra-index-url https://download.pytorch.org/whl/cpu
fi
- name: Check environment
run: |

@@ -158,41 +185,16 @@ jobs:
python --version
pip --version
pip list
- name: Test Detect
shell: bash # for Windows compatibility
run: |
yolo detect train data=coco8.yaml model=yolov8n.yaml epochs=1 imgsz=32
yolo detect train data=coco8.yaml model=yolov8n.pt epochs=1 imgsz=32
yolo detect val data=coco8.yaml model=runs/detect/train/weights/last.pt imgsz=32
yolo detect predict model=runs/detect/train/weights/last.pt imgsz=32 source=ultralytics/assets/bus.jpg
yolo export model=runs/detect/train/weights/last.pt imgsz=32 format=torchscript
- name: Test Segment
shell: bash # for Windows compatibility
run: |
yolo segment train data=coco8-seg.yaml model=yolov8n-seg.yaml epochs=1 imgsz=32
yolo segment train data=coco8-seg.yaml model=yolov8n-seg.pt epochs=1 imgsz=32
yolo segment val data=coco8-seg.yaml model=runs/segment/train/weights/last.pt imgsz=32
yolo segment predict model=runs/segment/train/weights/last.pt imgsz=32 source=ultralytics/assets/bus.jpg
yolo export model=runs/segment/train/weights/last.pt imgsz=32 format=torchscript
- name: Test Classify
shell: bash # for Windows compatibility
run: |
yolo classify train data=imagenet10 model=yolov8n-cls.yaml epochs=1 imgsz=32
yolo classify train data=imagenet10 model=yolov8n-cls.pt epochs=1 imgsz=32
yolo classify val data=imagenet10 model=runs/classify/train/weights/last.pt imgsz=32
yolo classify predict model=runs/classify/train/weights/last.pt imgsz=32 source=ultralytics/assets/bus.jpg
yolo export model=runs/classify/train/weights/last.pt imgsz=32 format=torchscript
- name: Test Pose
shell: bash # for Windows compatibility
run: |
yolo pose train data=coco8-pose.yaml model=yolov8n-pose.yaml epochs=1 imgsz=32
yolo pose train data=coco8-pose.yaml model=yolov8n-pose.pt epochs=1 imgsz=32
yolo pose val data=coco8-pose.yaml model=runs/pose/train/weights/last.pt imgsz=32
yolo pose predict model=runs/pose/train/weights/last.pt imgsz=32 source=ultralytics/assets/bus.jpg
yolo export model=runs/pose/train/weights/last.pt imgsz=32 format=torchscript
- name: Pytest tests
shell: bash # for Windows compatibility
run: pytest tests
run: pytest --cov=ultralytics/ --cov-report xml tests/
- name: Upload Coverage Reports to CodeCov
if: github.repository == 'ultralytics/ultralytics' && matrix.os == 'ubuntu-latest' && matrix.python-version == '3.11'
uses: codecov/codecov-action@v3
with:
flags: Tests
env:
CODECOV_TOKEN: ${{ secrets.CODECOV_TOKEN }}

Summary:
runs-on: ubuntu-latest

@@ -201,7 +203,7 @@ jobs:
steps:
- name: Check for failure and notify
if: (needs.HUB.result == 'failure' || needs.Benchmarks.result == 'failure' || needs.Tests.result == 'failure') && github.repository == 'ultralytics/ultralytics' && (github.event_name == 'schedule' || github.event_name == 'push')
uses: slackapi/slack-github-action@v1.23.0
uses: slackapi/slack-github-action@v1.24.0
with:
payload: |
{"text": "<!channel> GitHub Actions error for ${{ github.workflow }} ❌\n\n\n*Repository:* https://github.com/${{ github.repository }}\n*Action:* https://github.com/${{ github.repository }}/actions/runs/${{ github.run_id }}\n*Author:* ${{ github.actor }}\n*Event:* ${{ github.event_name }}\n"}

@@ -6,12 +6,47 @@ name: Publish Docker Images
on:
push:
branches: [main]
workflow_dispatch:
inputs:
dockerfile:
type: choice
description: Select Dockerfile
options:
- Dockerfile-arm64
- Dockerfile-jetson
- Dockerfile-python
- Dockerfile-cpu
- Dockerfile
push:
type: boolean
description: Push image to Docker Hub
default: true

jobs:
docker:
if: github.repository == 'ultralytics/ultralytics'
name: Push Docker image to Docker Hub
name: Push
runs-on: ubuntu-latest
strategy:
fail-fast: false
max-parallel: 5
matrix:
include:
- dockerfile: "Dockerfile-arm64"
tags: "latest-arm64"
platforms: "linux/arm64"
- dockerfile: "Dockerfile-jetson"
tags: "latest-jetson"
platforms: "linux/arm64"
- dockerfile: "Dockerfile-python"
tags: "latest-python"
platforms: "linux/amd64"
- dockerfile: "Dockerfile-cpu"
tags: "latest-cpu"
platforms: "linux/amd64"
- dockerfile: "Dockerfile"
tags: "latest"
platforms: "linux/amd64"
steps:
- name: Checkout repo
uses: actions/checkout@v3

@@ -28,40 +63,66 @@ jobs:
username: ${{ secrets.DOCKERHUB_USERNAME }}
password: ${{ secrets.DOCKERHUB_TOKEN }}

- name: Build and push arm64 image
uses: docker/build-push-action@v4
continue-on-error: true
with:
context: .
platforms: linux/arm64
file: docker/Dockerfile-arm64
push: true
tags: ultralytics/ultralytics:latest-arm64
- name: Retrieve Ultralytics version
id: get_version
run: |
VERSION=$(grep "^__version__ =" ultralytics/__init__.py | awk -F"'" '{print $2}')
echo "Retrieved Ultralytics version: $VERSION"
echo "version=$VERSION" >> $GITHUB_OUTPUT

- name: Build and push Jetson image
uses: docker/build-push-action@v4
continue-on-error: true
with:
context: .
platforms: linux/arm64
file: docker/Dockerfile-jetson
push: true
tags: ultralytics/ultralytics:latest-jetson
VERSION_TAG=$(echo "${{ matrix.tags }}" | sed "s/latest/${VERSION}/")
echo "Intended version tag: $VERSION_TAG"
echo "version_tag=$VERSION_TAG" >> $GITHUB_OUTPUT

- name: Build and push CPU image
uses: docker/build-push-action@v4
continue-on-error: true
with:
context: .
file: docker/Dockerfile-cpu
push: true
tags: ultralytics/ultralytics:latest-cpu
- name: Check if version tag exists on DockerHub
id: check_tag
run: |
RESPONSE=$(curl -s https://hub.docker.com/v2/repositories/ultralytics/ultralytics/tags/$VERSION_TAG)
MESSAGE=$(echo $RESPONSE | jq -r '.message')
if [[ "$MESSAGE" == "null" ]]; then
echo "Tag $VERSION_TAG already exists on DockerHub."
echo "exists=true" >> $GITHUB_OUTPUT
elif [[ "$MESSAGE" == *"404"* ]]; then
echo "Tag $VERSION_TAG does not exist on DockerHub."
echo "exists=false" >> $GITHUB_OUTPUT
else
echo "Unexpected response from DockerHub. Please check manually."
echo "exists=false" >> $GITHUB_OUTPUT
fi
env:
VERSION_TAG: ${{ steps.get_version.outputs.version_tag }}

- name: Build and push GPU image
uses: docker/build-push-action@v4
continue-on-error: true
- name: Build Image
if: github.event_name == 'push' || github.event.inputs.dockerfile == matrix.dockerfile
run: |
docker build --platform ${{ matrix.platforms }} -f docker/${{ matrix.dockerfile }} \
-t ultralytics/ultralytics:${{ matrix.tags }} \
-t ultralytics/ultralytics:${{ steps.get_version.outputs.version_tag }} .

- name: Run Tests
if: (github.event_name == 'push' || github.event.inputs.dockerfile == matrix.dockerfile) && matrix.platforms == 'linux/amd64' # arm64 images not supported on GitHub CI runners
run: docker run ultralytics/ultralytics:${{ matrix.tags }} /bin/bash -c "pip install pytest && pytest tests"

- name: Run Benchmarks
# WARNING: Dockerfile (GPU) error on TF.js export 'module 'numpy' has no attribute 'object'.
if: (github.event_name == 'push' || github.event.inputs.dockerfile == matrix.dockerfile) && matrix.platforms == 'linux/amd64' && matrix.dockerfile != 'Dockerfile' # arm64 images not supported on GitHub CI runners
run: docker run ultralytics/ultralytics:${{ matrix.tags }} yolo benchmark model=yolov8n.pt imgsz=160 verbose=0.26

- name: Push Docker Image with Ultralytics version tag
if: (github.event_name == 'push' || (github.event.inputs.dockerfile == matrix.dockerfile && github.event.inputs.push == 'true')) && steps.check_tag.outputs.exists == 'false'
run: |
docker push ultralytics/ultralytics:${{ steps.get_version.outputs.version_tag }}

- name: Push Docker Image with latest tag
if: github.event_name == 'push' || (github.event.inputs.dockerfile == matrix.dockerfile && github.event.inputs.push == 'true')
run: |
docker push ultralytics/ultralytics:${{ matrix.tags }}

- name: Notify on failure
if: github.event_name == 'push' && failure() # do not notify on cancelled() as cancelling is performed by hand
uses: slackapi/slack-github-action@v1.24.0
with:
context: .
file: docker/Dockerfile
push: true
tags: ultralytics/ultralytics:latest
payload: |
{"text": "<!channel> GitHub Actions error for ${{ github.workflow }} ❌\n\n\n*Repository:* https://github.com/${{ github.repository }}\n*Action:* https://github.com/${{ github.repository }}/actions/runs/${{ github.run_id }}\n*Author:* ${{ github.actor }}\n*Event:* ${{ github.event_name }}\n"}
env:
SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK_URL_YOLO }}
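The Docker workflow above derives a versioned image tag from `ultralytics/__init__.py` and then checks Docker Hub for it. The shell pipeline (`grep`, `awk`, `sed`, `jq`) can be read as the following Python sketch. The function names are hypothetical, the version string `8.0.0` in the usage lines is a made-up example, and the sketch assumes `__version__` is written with single quotes, as the `awk -F"'"` call implies.

```python
import re


def read_version(init_text: str) -> str:
    """Return the text between the first pair of single quotes on the __version__ line."""
    match = re.search(r"^__version__ =[^'\n]*'([^']*)'", init_text, flags=re.MULTILINE)
    if match is None:
        raise ValueError("no single-quoted __version__ assignment found")
    return match.group(1)


def version_tag(matrix_tag: str, version: str) -> str:
    """Replace the first 'latest' in the matrix tag, like sed "s/latest/VERSION/"."""
    return matrix_tag.replace("latest", version, 1)


def tag_exists(message: str) -> bool:
    """Interpret the output of jq -r '.message' the way the check_tag step does.

    jq prints the literal string "null" when the response has no message field,
    which the workflow takes to mean the tag exists. A message containing "404"
    and any unexpected message both lead to exists=false.
    """
    return message == "null"


if __name__ == "__main__":
    version = read_version("__version__ = '8.0.0'\n")  # hypothetical version
    print(version_tag("latest-arm64", version))
    print(version_tag("latest", version))
    print(tag_exists("null"))
```

Because unexpected responses are treated as "tag does not exist", a Docker Hub outage would not by itself block the versioned push. That is a property of the workflow as written rather than of this sketch.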

@@ -32,9 +32,11 @@ jobs:

If this is a custom training ❓ Question, please provide as much information as possible, including dataset image examples and training logs, and verify you are following our [Tips for Best Training Results](https://docs.ultralytics.com/yolov5/tutorials/tips_for_best_training_results/).

Join the vibrant [Ultralytics Discord](https://ultralytics.com/discord) 🎧 community for real-time conversations and collaborations. This platform offers a perfect space to inquire, showcase your work, and connect with fellow Ultralytics users.

## Install

Pip install the `ultralytics` package including all [requirements](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt) in a [**Python>=3.7**](https://www.python.org/) environment with [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/).
Pip install the `ultralytics` package including all [requirements](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt) in a [**Python>=3.8**](https://www.python.org/) environment with [**PyTorch>=1.8**](https://pytorch.org/get-started/locally/).

```bash
pip install ultralytics

@@ -28,7 +28,7 @@ jobs:
timeout_minutes: 5
retry_wait_seconds: 60
max_attempts: 3
command: lychee --accept 429,999 --exclude-loopback --exclude 'https?://(www\.)?(twitter\.com|instagram\.com)' --exclude-path '**/ci.yaml' --exclude-mail --github-token ${{ secrets.GITHUB_TOKEN }} './**/*.md' './**/*.html'
command: lychee --accept 429,999 --exclude-loopback --exclude 'https?://(www\.)?(linkedin\.com|twitter\.com|instagram\.com)' --exclude-path '**/ci.yaml' --exclude-mail --github-token ${{ secrets.GITHUB_TOKEN }} './**/*.md' './**/*.html'

- name: Test Markdown, HTML, YAML, Python and Notebook links with retry
if: github.event_name == 'workflow_dispatch'

@@ -37,4 +37,4 @@ jobs:
timeout_minutes: 5
retry_wait_seconds: 60
max_attempts: 3
command: lychee --accept 429,999 --exclude-loopback --exclude 'https?://(www\.)?(twitter\.com|instagram\.com|url\.com)' --exclude-path '**/ci.yaml' --exclude-mail --github-token ${{ secrets.GITHUB_TOKEN }} './**/*.md' './**/*.html' './**/*.yml' './**/*.yaml' './**/*.py' './**/*.ipynb'
command: lychee --accept 429,999 --exclude-loopback --exclude 'https?://(www\.)?(linkedin\.com|twitter\.com|instagram\.com|url\.com)' --exclude-path '**/ci.yaml' --exclude-mail --github-token ${{ secrets.GITHUB_TOKEN }} './**/*.md' './**/*.html' './**/*.yml' './**/*.yaml' './**/*.py' './**/*.ipynb'

@@ -33,14 +33,14 @@ jobs:
- name: Install dependencies
run: |
python -m pip install --upgrade pip wheel build twine
pip install -e '.[dev]' --extra-index-url https://download.pytorch.org/whl/cpu
pip install -e ".[dev]" --extra-index-url https://download.pytorch.org/whl/cpu
- name: Check PyPI version
shell: python
run: |
import os
import pkg_resources as pkg
import ultralytics
from ultralytics.yolo.utils.checks import check_latest_pypi_version
from ultralytics.utils.checks import check_latest_pypi_version

v_local = pkg.parse_version(ultralytics.__version__).release
v_pypi = pkg.parse_version(check_latest_pypi_version()).release

@@ -63,7 +63,7 @@ jobs:
python -m twine upload dist/* -u __token__ -p $PYPI_TOKEN
- name: Deploy Docs
continue-on-error: true
if: (github.event_name == 'push' && steps.check_pypi.outputs.increment == 'True') || github.event.inputs.docs == 'true'
if: (github.event_name == 'push' || github.event.inputs.docs == 'true') && github.repository == 'ultralytics/ultralytics' && github.actor == 'glenn-jocher'
env:
PERSONAL_ACCESS_TOKEN: ${{ secrets.PERSONAL_ACCESS_TOKEN }}
run: |

@@ -94,7 +94,7 @@ jobs:
echo "PR_TITLE=$PR_TITLE" >> $GITHUB_ENV
- name: Notify on Slack (Success)
if: success() && github.event_name == 'push' && steps.check_pypi.outputs.increment == 'True'
uses: slackapi/slack-github-action@v1.23.0
uses: slackapi/slack-github-action@v1.24.0
with:
payload: |
{"text": "<!channel> GitHub Actions success for ${{ github.workflow }} ✅\n\n\n*Repository:* https://github.com/${{ github.repository }}\n*Action:* https://github.com/${{ github.repository }}/actions/runs/${{ github.run_id }}\n*Author:* ${{ github.actor }}\n*Event:* NEW 'ultralytics ${{ steps.check_pypi.outputs.version }}' pip package published 😃\n*Job Status:* ${{ job.status }}\n*Pull Request:* <https://github.com/${{ github.repository }}/pull/${{ env.PR_NUMBER }}> ${{ env.PR_TITLE }}\n"}

@@ -102,7 +102,7 @@ jobs:
SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK_URL_YOLO }}
- name: Notify on Slack (Failure)
if: failure()
uses: slackapi/slack-github-action@v1.23.0
uses: slackapi/slack-github-action@v1.24.0
with:
payload: |
{"text": "<!channel> GitHub Actions error for ${{ github.workflow }} ❌\n\n\n*Repository:* https://github.com/${{ github.repository }}\n*Action:* https://github.com/${{ github.repository }}/actions/runs/${{ github.run_id }}\n*Author:* ${{ github.actor }}\n*Event:* ${{ github.event_name }}\n*Job Status:* ${{ job.status }}\n*Pull Request:* <https://github.com/${{ github.repository }}/pull/${{ env.PR_NUMBER }}> ${{ env.PR_TITLE }}\n"}

9

downloads/ultralytics-main/.gitignore

vendored


@@ -118,11 +118,15 @@ venv.bak/
.spyderproject
.spyproject

# VSCode project settings
.vscode/

# Rope project settings
.ropeproject

# mkdocs documentation
/site
mkdocs_github_authors.yaml

# mypy
.mypy_cache/

@@ -136,7 +140,6 @@ dmypy.json
datasets/
runs/
wandb/

.DS_Store

# Neural Network weights -----------------------------------------------------------------------------------------------

@@ -147,6 +150,7 @@ weights/
*.onnx
*.engine
*.mlmodel
*.mlpackage
*.torchscript
*.tflite
*.h5

@@ -154,3 +158,6 @@ weights/
*_web_model/
*_openvino_model/
*_paddle_model/

# Autogenerated files for tests
/ultralytics/assets/

@@ -22,7 +22,7 @@ repos:
- id: detect-private-key

- repo: https://github.com/asottile/pyupgrade
rev: v3.3.2
rev: v3.10.1
hooks:
- id: pyupgrade
name: Upgrade code

@@ -34,7 +34,7 @@ repos:
name: Sort imports

- repo: https://github.com/google/yapf
rev: v0.33.0
rev: v0.40.0
hooks:
- id: yapf
name: YAPF formatting

@@ -50,17 +50,17 @@ repos:
# exclude: "README.md|README.zh-CN.md|CONTRIBUTING.md"

- repo: https://github.com/PyCQA/flake8
rev: 6.0.0
rev: 6.1.0
hooks:
- id: flake8
name: PEP8

- repo: https://github.com/codespell-project/codespell
rev: v2.2.4
rev: v2.2.5
hooks:
- id: codespell
args:
- --ignore-words-list=crate,nd,strack,dota
- --ignore-words-list=crate,nd,strack,dota,ane,segway,fo

# - repo: https://github.com/asottile/yesqa
# rev: v1.4.0

@@ -65,7 +65,7 @@ Here is an example:

```python
"""
What the function does. Performs NMS on given detection predictions.
What the function does. Performs NMS on given detection predictions.

Args:
arg1: The description of the 1st argument

@@ -9,6 +9,7 @@

<div>
<a href="https://github.com/ultralytics/ultralytics/actions/workflows/ci.yaml"><img src="https://github.com/ultralytics/ultralytics/actions/workflows/ci.yaml/badge.svg" alt="Ultralytics CI"></a>
<a href="https://codecov.io/github/ultralytics/ultralytics"><img src="https://codecov.io/github/ultralytics/ultralytics/branch/main/graph/badge.svg?token=HHW7IIVFVY" alt="Ultralytics Code Coverage"></a>
<a href="https://zenodo.org/badge/latestdoi/264818686"><img src="https://zenodo.org/badge/264818686.svg" alt="YOLOv8 Citation"></a>
<a href="https://hub.docker.com/r/ultralytics/ultralytics"><img src="https://img.shields.io/docker/pulls/ultralytics/ultralytics?logo=docker" alt="Docker Pulls"></a>
<br>

@@ -20,7 +21,7 @@

[Ultralytics](https://ultralytics.com) [YOLOv8](https://github.com/ultralytics/ultralytics) is a cutting-edge, state-of-the-art (SOTA) model that builds upon the success of previous YOLO versions and introduces new features and improvements to further boost performance and flexibility. YOLOv8 is designed to be fast, accurate, and easy to use, making it an excellent choice for a wide range of object detection and tracking, instance segmentation, image classification and pose estimation tasks.

We hope that the resources here will help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, and join our <a href="https://discord.gg/n6cFeSPZdD">Discord</a> community for questions and discussions!
We hope that the resources here will help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, and join our <a href="https://ultralytics.com/discord">Discord</a> community for questions and discussions!

To request an Enterprise License please complete the form at [Ultralytics Licensing](https://ultralytics.com/license).

@@ -30,7 +31,7 @@ To request an Enterprise License please complete the form at [Ultralytics Licens
<a href="https://github.com/ultralytics" style="text-decoration:none;">
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="2%" alt="" /></a>
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />
<a href="https://www.linkedin.com/company/ultralytics" style="text-decoration:none;">
<a href="https://www.linkedin.com/company/ultralytics/" style="text-decoration:none;">
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="2%" alt="" /></a>
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />
<a href="https://twitter.com/ultralytics" style="text-decoration:none;">

@@ -45,7 +46,7 @@ To request an Enterprise License please complete the form at [Ultralytics Licens
<a href="https://www.instagram.com/ultralytics/" style="text-decoration:none;">
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-instagram.png" width="2%" alt="" /></a>
<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />
<a href="https://discord.gg/n6cFeSPZdD" style="text-decoration:none;">
<a href="https://ultralytics.com/discord" style="text-decoration:none;">
<img src="https://github.com/ultralytics/assets/blob/main/social/logo-social-discord.png" width="2%" alt="" /></a>
</div>
</div>

@@ -57,12 +58,16 @@ See below for a quickstart installation and usage example, and see the [YOLOv8 D
<details open>
<summary>Install</summary>

Pip install the ultralytics package including all [requirements](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt) in a [**Python>=3.7**](https://www.python.org/) environment with [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/).
Pip install the ultralytics package including all [requirements](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt) in a [**Python>=3.8**](https://www.python.org/) environment with [**PyTorch>=1.8**](https://pytorch.org/get-started/locally/).

[](https://badge.fury.io/py/ultralytics) [](https://pepy.tech/project/ultralytics)

```bash
pip install ultralytics
```

For alternative installation methods including [Conda](https://anaconda.org/conda-forge/ultralytics), [Docker](https://hub.docker.com/r/ultralytics/ultralytics), and Git, please refer to the [Quickstart Guide](https://docs.ultralytics.com/quickstart).

</details>

<details open>

@ -93,18 +98,20 @@ model = YOLO("yolov8n.pt") # load a pretrained model (recommended for training)

|

||||

model.train(data="coco128.yaml", epochs=3) # train the model

|

||||

metrics = model.val() # evaluate model performance on the validation set

|

||||

results = model("https://ultralytics.com/images/bus.jpg") # predict on an image

|

||||

success = model.export(format="onnx") # export the model to ONNX format

|

||||

path = model.export(format="onnx") # export the model to ONNX format

|

||||

```

|

||||

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases). See YOLOv8 [Python Docs](https://docs.ultralytics.com/usage/python) for more examples.

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/cfg/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases). See YOLOv8 [Python Docs](https://docs.ultralytics.com/usage/python) for more examples.
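The quickstart change above renames the result of `model.export(format="onnx")` from `success` to `path`, which suggests the call hands back the location of the exported artifact rather than a boolean. Assuming that value is a string or path-like object (an assumption, not confirmed by this diff), a small check such as the sketch below could confirm the export landed on disk. `summarize_export` is a hypothetical helper, not an Ultralytics function.

```python
from pathlib import Path


def summarize_export(path):
    """Return (name, kind) for an exported model, where kind is 'file' or 'directory'."""
    exported = Path(path)
    if not exported.exists():
        raise FileNotFoundError(f"expected an exported model at {exported}")
    # Some export formats may produce a folder rather than a single file.
    kind = "directory" if exported.is_dir() else "file"
    return exported.name, kind


# Hypothetical usage with the README snippet (requires the ultralytics package):
#   path = model.export(format="onnx")
#   print(summarize_export(path))
```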

|

||||

|

||||

</details>

|

||||

|

||||

## <div align="center">Models</div>

|

||||

|

||||

All YOLOv8 pretrained models are available here. Detect, Segment and Pose models are pretrained on the [COCO](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/datasets/coco.yaml) dataset, while Classify models are pretrained on the [ImageNet](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/datasets/ImageNet.yaml) dataset.

|

||||

YOLOv8 [Detect](https://docs.ultralytics.com/tasks/detect), [Segment](https://docs.ultralytics.com/tasks/segment) and [Pose](https://docs.ultralytics.com/tasks/pose) models pretrained on the [COCO](https://docs.ultralytics.com/datasets/detect/coco) dataset are available here, as well as YOLOv8 [Classify](https://docs.ultralytics.com/tasks/classify) models pretrained on the [ImageNet](https://docs.ultralytics.com/datasets/classify/imagenet) dataset. [Track](https://docs.ultralytics.com/modes/track) mode is available for all Detect, Segment and Pose models.

|

||||

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases) on first use.

|

||||

<img width="1024" src="https://raw.githubusercontent.com/ultralytics/assets/main/im/banner-tasks.png">

|

||||

|

||||

All [Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/cfg/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases) on first use.

|

||||

|

||||

<details open><summary>Detection</summary>

|

||||

|

||||

@ -186,6 +193,8 @@ See [Pose Docs](https://docs.ultralytics.com/tasks/pose) for usage examples with

|

||||

|

||||

## <div align="center">Integrations</div>

|

||||

|

||||

Our key integrations with leading AI platforms extend the functionality of Ultralytics' offerings, enhancing tasks like dataset labeling, training, visualization, and model management. Discover how Ultralytics, in collaboration with [Roboflow](https://roboflow.com/?ref=ultralytics), ClearML, [Comet](https://bit.ly/yolov8-readme-comet), Neural Magic and [OpenVINO](https://docs.ultralytics.com/integrations/openvino), can optimize your AI workflow.

|

||||

|

||||

<br>

|

||||

<a href="https://bit.ly/ultralytics_hub" target="_blank">

|

||||

<img width="100%" src="https://github.com/ultralytics/assets/raw/main/yolov8/banner-integrations.png"></a>

|

||||

@ -228,21 +237,21 @@ We love your input! YOLOv5 and YOLOv8 would not be possible without help from ou

|

||||

|

||||

## <div align="center">License</div>

|

||||

|

||||

YOLOv8 is available under two different licenses:

|

||||

Ultralytics offers two licensing options to accommodate diverse use cases:

|

||||

|

||||

- **AGPL-3.0 License**: See [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for details.

|

||||

- **Enterprise License**: Provides greater flexibility for commercial product development without the open-source requirements of AGPL-3.0. Typical use cases are embedding Ultralytics software and AI models in commercial products and applications. Request an Enterprise License at [Ultralytics Licensing](https://ultralytics.com/license).

|

||||

- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/licenses/) open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for more details.

|

||||

- **Enterprise License**: Designed for commercial use, this license permits seamless integration of Ultralytics software and AI models into commercial goods and services, bypassing the open-source requirements of AGPL-3.0. If your scenario involves embedding our solutions into a commercial offering, reach out through [Ultralytics Licensing](https://ultralytics.com/license).

|

||||

|

||||

## <div align="center">Contact</div>

|

||||

|

||||

For YOLOv8 bug reports and feature requests please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues), and join our [Discord](https://discord.gg/n6cFeSPZdD) community for questions and discussions!

|

||||

For Ultralytics bug reports and feature requests please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues), and join our [Discord](https://ultralytics.com/discord) community for questions and discussions!

|

||||

|

||||

<br>

|

||||

<div align="center">

|

||||

<a href="https://github.com/ultralytics" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="3%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://www.linkedin.com/company/ultralytics" style="text-decoration:none;">

|

||||

<a href="https://www.linkedin.com/company/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="3%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://twitter.com/ultralytics" style="text-decoration:none;">

|

||||

@ -257,6 +266,6 @@ For YOLOv8 bug reports and feature requests please visit [GitHub Issues](https:/

|

||||

<a href="https://www.instagram.com/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-instagram.png" width="3%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://discord.gg/n6cFeSPZdD" style="text-decoration:none;">

|

||||

<a href="https://ultralytics.com/discord" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-discord.png" width="3%" alt="" /></a>

|

||||

</div>

|

||||

|

||||

@ -9,6 +9,7 @@

|

||||

|

||||

<div>

|

||||

<a href="https://github.com/ultralytics/ultralytics/actions/workflows/ci.yaml"><img src="https://github.com/ultralytics/ultralytics/actions/workflows/ci.yaml/badge.svg" alt="Ultralytics CI"></a>

|

||||

<a href="https://codecov.io/github/ultralytics/ultralytics"><img src="https://codecov.io/github/ultralytics/ultralytics/branch/main/graph/badge.svg?token=HHW7IIVFVY" alt="Ultralytics Code Coverage"></a>

|

||||

<a href="https://zenodo.org/badge/latestdoi/264818686"><img src="https://zenodo.org/badge/264818686.svg" alt="YOLOv8 Citation"></a>

|

||||

<a href="https://hub.docker.com/r/ultralytics/ultralytics"><img src="https://img.shields.io/docker/pulls/ultralytics/ultralytics?logo=docker" alt="Docker Pulls"></a>

|

||||

<br>

|

||||

@ -20,7 +21,7 @@

|

||||

|

||||

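[Ultralytics](https://ultralytics.com) [YOLOv8](https://github.com/ultralytics/ultralytics) is a cutting-edge, state-of-the-art (SOTA) model that builds on the success of previous YOLO versions and introduces new features and improvements to further boost performance and flexibility. YOLOv8 is designed to be fast, accurate, and easy to use, making it an excellent choice for a wide range of object detection and tracking, instance segmentation, image classification, and pose estimation tasks.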

|

||||

|

||||

We hope the resources here help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, and join our <a href="https://discord.gg/n6cFeSPZdD">Discord</a> community for questions and discussions!

|

||||

We hope the resources here help you get the most out of YOLOv8. Please browse the YOLOv8 <a href="https://docs.ultralytics.com/">Docs</a> for details, raise an issue on <a href="https://github.com/ultralytics/ultralytics/issues/new/choose">GitHub</a> for support, and join our <a href="https://ultralytics.com/discord">Discord</a> community for questions and discussions!

|

||||

|

||||

To request an Enterprise License, please complete the form at [Ultralytics Licensing](https://ultralytics.com/license)

|

||||

|

||||

@ -30,7 +31,7 @@

|

||||

<a href="https://github.com/ultralytics" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="2%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />

|

||||

<a href="https://www.linkedin.com/company/ultralytics" style="text-decoration:none;">

|

||||

<a href="https://www.linkedin.com/company/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="2%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />

|

||||

<a href="https://twitter.com/ultralytics" style="text-decoration:none;">

|

||||

@ -45,7 +46,7 @@

|

||||

<a href="https://www.instagram.com/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-instagram.png" width="2%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="" />

|

||||

<a href="https://discord.gg/n6cFeSPZdD" style="text-decoration:none;">

|

||||

<a href="https://ultralytics.com/discord" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-discord.png" width="2%" alt="" /></a>

|

||||

</div>

|

||||

</div>

|

||||

@ -57,12 +58,16 @@

|

||||

<details open>

|

||||

<summary>Install</summary>

|

||||

|

||||

In a [**Python>=3.7**](https://www.python.org/) environment with [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/), pip install the ultralytics package together with all [requirements](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt).

|

||||

Use pip to install the `ultralytics` package in a [**Python>=3.8**](https://www.python.org/) environment that also provides [**PyTorch>=1.8**](https://pytorch.org/get-started/locally/). This also installs all required [dependencies](https://github.com/ultralytics/ultralytics/blob/main/requirements.txt).

|

||||

|

||||

[PyPI version](https://badge.fury.io/py/ultralytics) [Downloads](https://pepy.tech/project/ultralytics)

|

||||

|

||||

```bash

|

||||

pip install ultralytics

|

||||

```

|

||||

|

||||

For alternative installation methods including [Conda](https://anaconda.org/conda-forge/ultralytics), [Docker](https://hub.docker.com/r/ultralytics/ultralytics), and Git, please refer to the [Quickstart Guide](https://docs.ultralytics.com/quickstart).

|

||||

|

||||

</details>

|

||||

|

||||

<details open>

|

||||

@ -96,15 +101,17 @@ results = model("https://ultralytics.com/images/bus.jpg") # 对图像进行预

|

||||

success = model.export(format="onnx") # export the model to ONNX format

|

||||

```

|

||||

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases). See YOLOv8 [Python Docs](https://docs.ultralytics.com/usage/python) for more examples.

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/cfg/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases). See YOLOv8 [Python Docs](https://docs.ultralytics.com/usage/python) for more examples.

|

||||

|

||||

</details>

|

||||

|

||||

## <div align="center">Models</div>

|

||||

|

||||

All YOLOv8 pretrained models are available here. Detect, Segment and Pose models are pretrained on the [COCO](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/datasets/coco.yaml) dataset, while Classify models are pretrained on the [ImageNet](https://github.com/ultralytics/ultralytics/blob/main/ultralytics/datasets/ImageNet.yaml) dataset.

|

||||

YOLOv8 [Detect](https://docs.ultralytics.com/tasks/detect), [Segment](https://docs.ultralytics.com/tasks/segment) and [Pose](https://docs.ultralytics.com/tasks/pose) models pretrained on the [COCO](https://docs.ultralytics.com/datasets/detect/coco) dataset are available here, as well as YOLOv8 [Classify](https://docs.ultralytics.com/tasks/classify) models pretrained on the [ImageNet](https://docs.ultralytics.com/datasets/classify/imagenet) dataset. All Detect, Segment and Pose models support [Track](https://docs.ultralytics.com/modes/track) mode.

|

||||

|

||||

[Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases) on first use.

|

||||

<img width="1024" src="https://raw.githubusercontent.com/ultralytics/assets/main/im/banner-tasks.png">

|

||||

|

||||

All [Models](https://github.com/ultralytics/ultralytics/tree/main/ultralytics/cfg/models) download automatically from the latest Ultralytics [release](https://github.com/ultralytics/assets/releases) on first use.

|

||||

|

||||

<details open><summary>Detection</summary>

|

||||

|

||||

@ -185,6 +192,8 @@ success = model.export(format="onnx") # export the model to ONNX format

|

||||

|

||||

## <div align="center">Integrations</div>

|

||||

|

||||

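Our key integrations with leading AI platforms extend the functionality of Ultralytics' offerings, enhancing tasks like dataset labeling, training, visualization, and model management. Discover how Ultralytics, in collaboration with [Roboflow](https://roboflow.com/?ref=ultralytics), ClearML, [Comet](https://bit.ly/yolov8-readme-comet), Neural Magic and [OpenVINO](https://docs.ultralytics.com/integrations/openvino), can optimize your AI workflow.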

|

||||

|

||||

<br>

|

||||

<a href="https://bit.ly/ultralytics_hub" target="_blank">

|

||||

<img width="100%" src="https://github.com/ultralytics/assets/raw/main/yolov8/banner-integrations.png"></a>

|

||||

@ -227,21 +236,21 @@ success = model.export(format="onnx") # export the model to ONNX format

|

||||

|

||||

## <div align="center">License</div>

|

||||

|

||||

YOLOv8 is available under two different licenses:

|

||||

Ultralytics offers two licensing options to accommodate diverse use cases:

|

||||

|

||||

- **AGPL-3.0 License**: See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for details.

|

||||

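- **Enterprise License**: Provides greater flexibility for commercial product development without the open-source requirements of AGPL-3.0. Typical use cases are embedding Ultralytics software and AI models in commercial products and applications. Request an Enterprise License at [Ultralytics Licensing](https://ultralytics.com/license).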

|

||||

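- **AGPL-3.0 License**: This [OSI-approved](https://opensource.org/licenses/) open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the [LICENSE](https://github.com/ultralytics/ultralytics/blob/main/LICENSE) file for more details.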

|

||||

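- **Enterprise License**: Designed for commercial use, this license permits seamless integration of Ultralytics software and AI models into commercial products and services, bypassing the open-source requirements of AGPL-3.0. If your scenario involves embedding our solutions into a commercial offering, reach out through [Ultralytics Licensing](https://ultralytics.com/license).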

|

||||

|

||||

## <div align="center">Contact</div>

|

||||

|

||||

For YOLOv8 bug reports and feature requests, please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues), and join our [Discord](https://discord.gg/n6cFeSPZdD) community for questions and discussions!

|

||||

For Ultralytics bug reports and feature requests, please visit [GitHub Issues](https://github.com/ultralytics/ultralytics/issues), and join our [Discord](https://ultralytics.com/discord) community for questions and discussions!

|

||||

|

||||

<br>

|

||||

<div align="center">

|

||||

<a href="https://github.com/ultralytics" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="3%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://www.linkedin.com/company/ultralytics" style="text-decoration:none;">

|

||||

<a href="https://www.linkedin.com/company/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="3%" alt="" /></a>

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://twitter.com/ultralytics" style="text-decoration:none;">

|

||||

@ -255,6 +264,6 @@ YOLOv8 is available under two different licenses:

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="" />

|

||||

<a href="https://www.instagram.com/ultralytics/" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-instagram.png" width="3%" alt="" /></a>

|

||||

<a href="https://discord.gg/n6cFeSPZdD" style="text-decoration:none;">

|

||||

<a href="https://ultralytics.com/discord" style="text-decoration:none;">

|

||||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-discord.png" width="3%" alt="" /></a>

|

||||

</div>

|

||||

|

||||

@ -3,15 +3,16 @@

|

||||

# Image is CUDA-optimized for YOLOv8 single/multi-GPU training and inference

|

||||

|

||||

# Start FROM PyTorch image https://hub.docker.com/r/pytorch/pytorch or nvcr.io/nvidia/pytorch:23.03-py3

|

||||

FROM pytorch/pytorch:2.0.0-cuda11.7-cudnn8-runtime

|

||||

FROM pytorch/pytorch:2.0.1-cuda11.7-cudnn8-runtime

|

||||

RUN pip install --no-cache nvidia-tensorrt --index-url https://pypi.ngc.nvidia.com

|

||||

|

||||

# Downloads to user config dir

|

||||

ADD https://ultralytics.com/assets/Arial.ttf https://ultralytics.com/assets/Arial.Unicode.ttf /root/.config/Ultralytics/

|

||||

|

||||

# Install linux packages

|

||||

# g++ required to build 'tflite_support' package

|

||||

# g++ required to build 'tflite_support' and 'lap' packages, libusb-1.0-0 required for 'tflite_support' package

|

||||

RUN apt update \

|

||||

&& apt install --no-install-recommends -y gcc git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++

|

||||

&& apt install --no-install-recommends -y gcc git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++ libusb-1.0-0

|

||||

# RUN alias python=python3

|

||||

|

||||

# Security updates

|

||||

@ -19,7 +20,6 @@ RUN apt update \

|

||||

RUN apt upgrade --no-install-recommends -y openssl tar

|

||||

|

||||

# Create working directory

|

||||

RUN mkdir -p /usr/src/ultralytics

|

||||

WORKDIR /usr/src/ultralytics

|

||||

|

||||

# Copy contents

|

||||

@ -29,10 +29,22 @@ ADD https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n.pt /u

|

||||

|

||||

# Install pip packages

|

||||

RUN python3 -m pip install --upgrade pip wheel

|

||||

RUN pip install --no-cache -e . albumentations comet tensorboard

|

||||

RUN pip install --no-cache -e ".[export]" thop albumentations comet pycocotools

|

||||

|

||||

# Run exports to AutoInstall packages

|

||||

RUN yolo export model=tmp/yolov8n.pt format=edgetpu imgsz=32

|

||||

RUN yolo export model=tmp/yolov8n.pt format=ncnn imgsz=32

|

||||

# Requires <= Python 3.10, bug with paddlepaddle==2.5.0

|

||||

RUN pip install --no-cache paddlepaddle==2.4.2 x2paddle

|

||||

# Fix error: `np.bool` was a deprecated alias for the builtin `bool`

|

||||

RUN pip install --no-cache numpy==1.23.5

|

||||

# Remove exported models

|

||||

RUN rm -rf tmp

|

||||

|

||||

# Set environment variables

|

||||

ENV OMP_NUM_THREADS=1

|

||||

# Avoid DDP error "MKL_THREADING_LAYER=INTEL is incompatible with libgomp.so.1 library" https://github.com/pytorch/pytorch/issues/37377

|

||||

ENV MKL_THREADING_LAYER=GNU

|

||||

|

||||

|

||||

# Usage Examples -------------------------------------------------------------------------------------------------------

|

||||

|

||||

@ -9,12 +9,12 @@ FROM arm64v8/ubuntu:22.10

|

||||

ADD https://ultralytics.com/assets/Arial.ttf https://ultralytics.com/assets/Arial.Unicode.ttf /root/.config/Ultralytics/

|

||||

|

||||

# Install linux packages

|

||||

# g++ required to build 'tflite_support' and 'lap' packages, libusb-1.0-0 required for 'tflite_support' package

|

||||

RUN apt update \

|

||||

&& apt install --no-install-recommends -y python3-pip git zip curl htop gcc libgl1-mesa-glx libglib2.0-0 libpython3-dev

|

||||

&& apt install --no-install-recommends -y python3-pip git zip curl htop gcc libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++ libusb-1.0-0

|

||||

# RUN alias python=python3

|

||||

|

||||

# Create working directory

|

||||

RUN mkdir -p /usr/src/ultralytics

|

||||

WORKDIR /usr/src/ultralytics

|

||||

|

||||

# Copy contents

|

||||

@ -24,7 +24,7 @@ ADD https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n.pt /u

|

||||

|

||||

# Install pip packages

|

||||

RUN python3 -m pip install --upgrade pip wheel

|

||||

RUN pip install --no-cache -e .

|

||||

RUN pip install --no-cache -e . thop

|

||||

|

||||

|

||||

# Usage Examples -------------------------------------------------------------------------------------------------------

|

||||

@ -32,5 +32,8 @@ RUN pip install --no-cache -e .

|

||||

# Build and Push

|

||||

# t=ultralytics/ultralytics:latest-arm64 && sudo docker build --platform linux/arm64 -f docker/Dockerfile-arm64 -t $t . && sudo docker push $t

|

||||

|

||||

# Pull and Run

|

||||

# Run

|

||||

# t=ultralytics/ultralytics:latest-arm64 && sudo docker run -it --ipc=host $t

|

||||

|

||||

# Pull and Run with local volume mounted

|

||||

# t=ultralytics/ultralytics:latest-arm64 && sudo docker pull $t && sudo docker run -it --ipc=host -v "$(pwd)"/datasets:/usr/src/datasets $t

|

||||

|

||||

@ -3,19 +3,18 @@

|

||||

# Image is CPU-optimized for ONNX, OpenVINO and PyTorch YOLOv8 deployments

|

||||

|

||||

# Start FROM Ubuntu image https://hub.docker.com/_/ubuntu

|

||||

FROM ubuntu:22.10

|

||||

FROM ubuntu:lunar-20230615

|

||||

|

||||

# Downloads to user config dir

|

||||

ADD https://ultralytics.com/assets/Arial.ttf https://ultralytics.com/assets/Arial.Unicode.ttf /root/.config/Ultralytics/

|

||||

|

||||

# Install linux packages

|

||||

# g++ required to build 'tflite_support' package

|

||||

# g++ required to build 'tflite_support' and 'lap' packages, libusb-1.0-0 required for 'tflite_support' package

|

||||

RUN apt update \

|

||||

&& apt install --no-install-recommends -y python3-pip git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++

|

||||

&& apt install --no-install-recommends -y python3-pip git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++ libusb-1.0-0

|

||||

# RUN alias python=python3

|

||||

|

||||

# Create working directory

|

||||

RUN mkdir -p /usr/src/ultralytics

|

||||

WORKDIR /usr/src/ultralytics

|

||||

|

||||

# Copy contents

|

||||

@ -23,15 +22,28 @@ WORKDIR /usr/src/ultralytics

|

||||

RUN git clone https://github.com/ultralytics/ultralytics /usr/src/ultralytics

|

||||

ADD https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n.pt /usr/src/ultralytics/

|

||||

|

||||

# Remove python3.11/EXTERNALLY-MANAGED or use 'pip install --break-system-packages' to avoid the 'externally-managed-environment' error on Ubuntu nightly

|

||||

RUN rm -rf /usr/lib/python3.11/EXTERNALLY-MANAGED

|

||||

|

||||

# Install pip packages

|

||||

RUN python3 -m pip install --upgrade pip wheel

|

||||

RUN pip install --no-cache -e . --extra-index-url https://download.pytorch.org/whl/cpu

|

||||

RUN pip install --no-cache -e ".[export]" thop --extra-index-url https://download.pytorch.org/whl/cpu

|

||||

|

||||

# Run exports to AutoInstall packages

|

||||

RUN yolo export model=tmp/yolov8n.pt format=edgetpu imgsz=32

|

||||

RUN yolo export model=tmp/yolov8n.pt format=ncnn imgsz=32

|

||||

# Requires <= Python 3.10, bug with paddlepaddle==2.5.0

|

||||

# RUN pip install --no-cache paddlepaddle==2.4.2 x2paddle

|

||||

# Remove exported models

|

||||

RUN rm -rf tmp

|

||||

|

||||

# Usage Examples -------------------------------------------------------------------------------------------------------

|

||||

|

||||

# Build and Push

|

||||

# t=ultralytics/ultralytics:latest-cpu && sudo docker build -f docker/Dockerfile-cpu -t $t . && sudo docker push $t

|

||||

|

||||

# Pull and Run

|

||||

# Run

|

||||

# t=ultralytics/ultralytics:latest-cpu && sudo docker run -it --ipc=host $t

|

||||

|

||||

# Pull and Run with local volume mounted

|

||||

# t=ultralytics/ultralytics:latest-cpu && sudo docker pull $t && sudo docker run -it --ipc=host -v "$(pwd)"/datasets:/usr/src/datasets $t

|

||||

|

||||

@ -9,13 +9,12 @@ FROM nvcr.io/nvidia/l4t-pytorch:r35.2.1-pth2.0-py3

|

||||

ADD https://ultralytics.com/assets/Arial.ttf https://ultralytics.com/assets/Arial.Unicode.ttf /root/.config/Ultralytics/

|

||||

|

||||

# Install linux packages

|

||||

# g++ required to build 'tflite_support' package

|

||||

# g++ required to build 'tflite_support' and 'lap' packages, libusb-1.0-0 required for 'tflite_support' package

|

||||

RUN apt update \

|

||||

&& apt install --no-install-recommends -y gcc git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++

|

||||

&& apt install --no-install-recommends -y gcc git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++ libusb-1.0-0

|

||||

# RUN alias python=python3

|

||||

|

||||

# Create working directory

|

||||

RUN mkdir -p /usr/src/ultralytics

|

||||

WORKDIR /usr/src/ultralytics

|

||||

|

||||

# Copy contents

|

||||

@ -23,9 +22,12 @@ WORKDIR /usr/src/ultralytics

|

||||

RUN git clone https://github.com/ultralytics/ultralytics /usr/src/ultralytics

|

||||

ADD https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n.pt /usr/src/ultralytics/

|

||||

|

||||

# Remove opencv-python from requirements.txt as it conflicts with opencv-python installed in base image

|

||||

RUN grep -v '^opencv-python' requirements.txt > tmp.txt && mv tmp.txt requirements.txt

|

||||

|

||||

# Install pip packages manually for TensorRT compatibility https://github.com/NVIDIA/TensorRT/issues/2567

|

||||

RUN python3 -m pip install --upgrade pip wheel

|

||||

RUN pip install --no-cache tqdm matplotlib pyyaml psutil pandas onnx "numpy==1.23"

|

||||

RUN pip install --no-cache tqdm matplotlib pyyaml psutil pandas onnx thop "numpy==1.23"

|

||||

RUN pip install --no-cache -e .

|

||||

|

||||

# Set environment variables

|

||||

@ -37,5 +39,8 @@ ENV OMP_NUM_THREADS=1

|

||||

# Build and Push

|

||||

# t=ultralytics/ultralytics:latest-jetson && sudo docker build --platform linux/arm64 -f docker/Dockerfile-jetson -t $t . && sudo docker push $t

|

||||

|

||||

# Pull and Run

|

||||

# t=ultralytics/ultralytics:jetson && sudo docker pull $t && sudo docker run -it --runtime=nvidia $t

|

||||

# Run

|

||||

# t=ultralytics/ultralytics:latest-jetson && sudo docker run -it --ipc=host $t

|

||||

|

||||

# Pull and Run with NVIDIA runtime

|

||||

# t=ultralytics/ultralytics:latest-jetson && sudo docker pull $t && sudo docker run -it --ipc=host --runtime=nvidia $t

|

||||

|

||||

49

downloads/ultralytics-main/docker/Dockerfile-python

Normal file

@ -0,0 +1,49 @@

|

||||

# Ultralytics YOLO 🚀, AGPL-3.0 license

|

||||

# Builds ultralytics/ultralytics:latest-python image on DockerHub https://hub.docker.com/r/ultralytics/ultralytics

|

||||

# Image is CPU-optimized for ONNX, OpenVINO and PyTorch YOLOv8 deployments

|

||||

|

||||

# Use the official Python 3.10 slim-bookworm as base image

|

||||

FROM python:3.10-slim-bookworm

|

||||

|

||||

# Downloads to user config dir

|

||||

ADD https://ultralytics.com/assets/Arial.ttf https://ultralytics.com/assets/Arial.Unicode.ttf /root/.config/Ultralytics/

|

||||

|

||||

# Install linux packages

|

||||

# g++ required to build 'tflite_support' and 'lap' packages, libusb-1.0-0 required for 'tflite_support' package

|

||||

RUN apt update \

|

||||

&& apt install --no-install-recommends -y python3-pip git zip curl htop libgl1-mesa-glx libglib2.0-0 libpython3-dev gnupg g++ libusb-1.0-0

|

||||

# RUN alias python=python3

|

||||

|

||||

# Create working directory

|

||||

WORKDIR /usr/src/ultralytics

|

||||

|

||||

# Copy contents

|

||||

# COPY . /usr/src/app (issues as not a .git directory)

|

||||

RUN git clone https://github.com/ultralytics/ultralytics /usr/src/ultralytics

|

||||

ADD https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n.pt /usr/src/ultralytics/

|

||||

|

||||

# Remove python3.11/EXTERNALLY-MANAGED or use 'pip install --break-system-packages' to avoid the 'externally-managed-environment' error on Ubuntu nightly

|

||||

# RUN rm -rf /usr/lib/python3.11/EXTERNALLY-MANAGED

|

||||

|

||||

# Install pip packages

|

||||

RUN python3 -m pip install --upgrade pip wheel

|

||||

RUN pip install --no-cache -e ".[export]" thop --extra-index-url https://download.pytorch.org/whl/cpu

|

||||

|

||||

# Run exports to AutoInstall packages

|

||||

RUN yolo export model=tmp/yolov8n.pt format=edgetpu imgsz=32

|

||||

RUN yolo export model=tmp/yolov8n.pt format=ncnn imgsz=32

|

||||

# Requires <= Python 3.10, bug with paddlepaddle==2.5.0

|

||||

RUN pip install --no-cache paddlepaddle==2.4.2 x2paddle

|

||||

# Remove exported models

|

||||

RUN rm -rf tmp

|

||||

|

||||

# Usage Examples -------------------------------------------------------------------------------------------------------

|

||||

|

||||

# Build and Push

|

||||

# t=ultralytics/ultralytics:latest-python && sudo docker build -f docker/Dockerfile-python -t $t . && sudo docker push $t

|

||||

|

||||

# Run

|

||||

# t=ultralytics/ultralytics:latest-python && sudo docker run -it --ipc=host $t

|

||||

|

||||

# Pull and Run with local volume mounted

|

||||

# t=ultralytics/ultralytics:latest-python && sudo docker pull $t && sudo docker run -it --ipc=host -v "$(pwd)"/datasets:/usr/src/datasets $t

|

||||

@ -1 +1 @@

|

||||

docs.ultralytics.com

|

||||

docs.ultralytics.com

|

||||

|

||||

@ -1,5 +1,6 @@

|

||||

---

|

||||

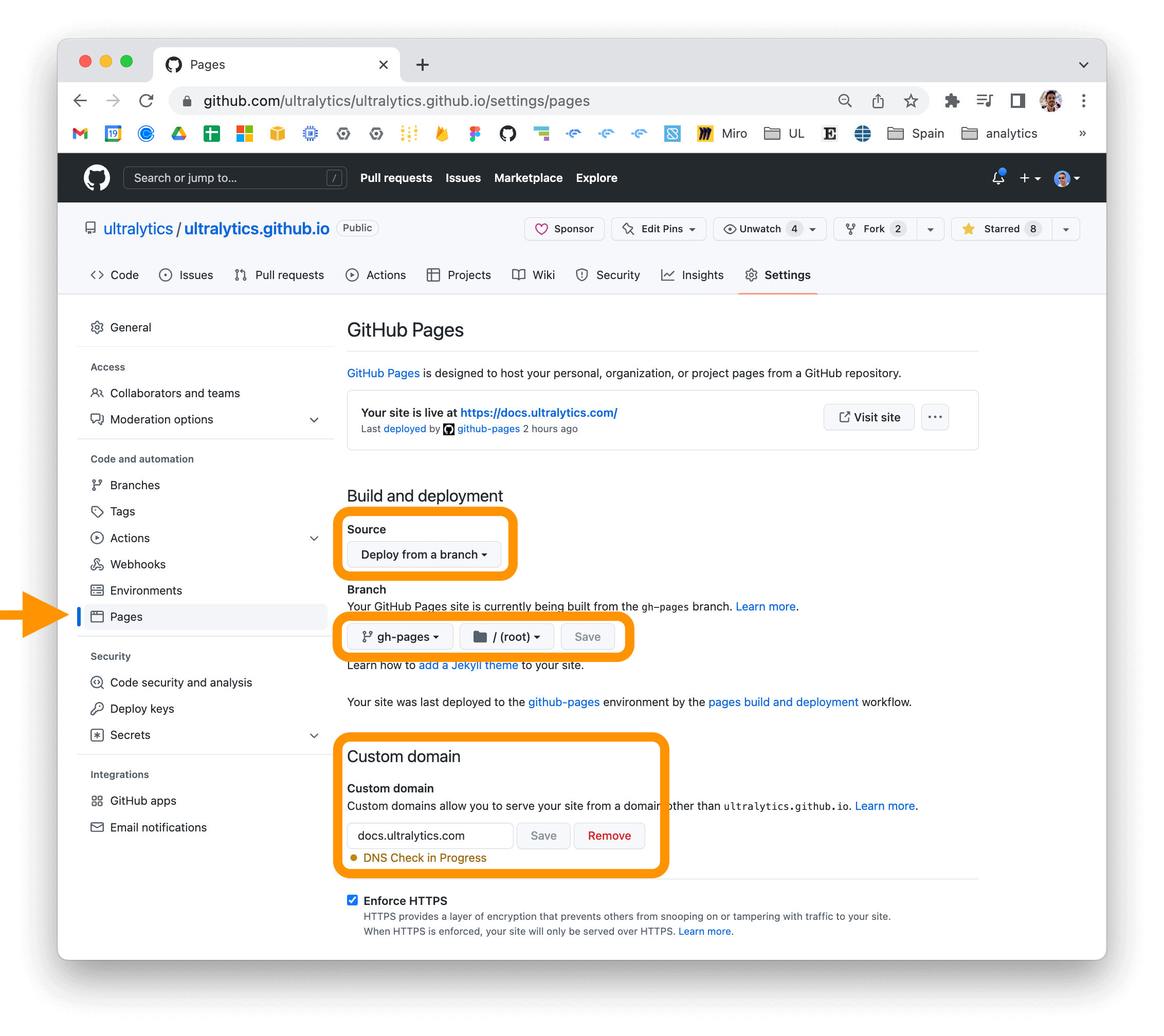

description: Learn how to install the Ultralytics package in developer mode and build/serve locally using MkDocs. Deploy your project to your host easily.

|

||||

description: Learn how to install Ultralytics in developer mode, build and serve it locally for testing, and deploy your documentation site on platforms like GitHub Pages, GitLab Pages, and Amazon S3.

|

||||

keywords: Ultralytics, documentation, mkdocs, installation, developer mode, building, deployment, local server, GitHub Pages, GitLab Pages, Amazon S3

|

||||

---

|

||||

|

||||

# Ultralytics Docs

|

||||

@ -26,7 +27,7 @@ cd ultralytics

|

||||

3. Install the package in developer mode using pip:

|

||||

|

||||

```bash

|

||||

pip install -e '.[dev]'

|

||||

pip install -e ".[dev]"

|

||||

```

|

||||

|

||||

This will install the ultralytics package and its dependencies in developer mode, allowing you to make changes to the

|

||||

@ -86,4 +87,4 @@ for your repository and updating the "Custom domain" field in the "GitHub Pages"

|

||||

|

||||

|

||||

For more information on deploying your MkDocs documentation site, see

|